Group Members

Meet the researchers and evaluators behind this usability study.

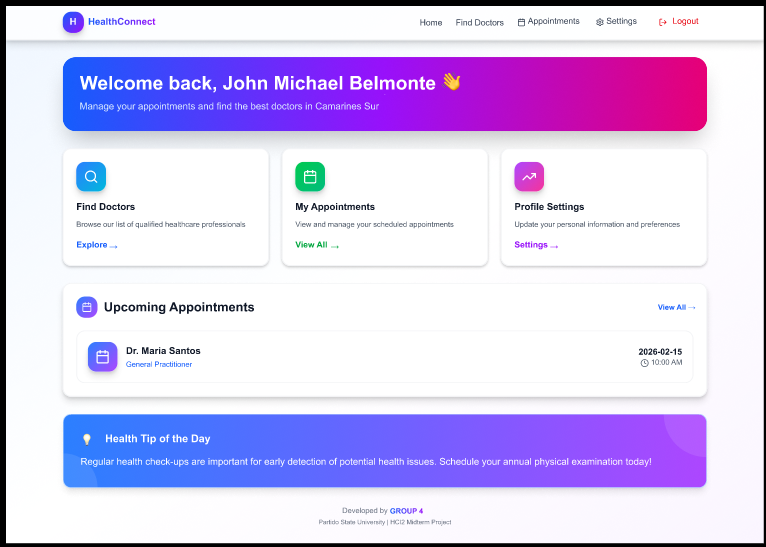

John Michael Belmonte

Compliance & Content Integrator

Jessica Vipinoso

Lead Frontend Developer & Visual Designer

Jomel Pahuwayan

Data Analyst & Research Lead

Earl Francis Salvo

UX Researcher & Multimedia Specialist